Your Alexa Speaker Can Be Hacked With Malicious Audio Tracks. And Lasers.

Updated Jan 2020

Can an Alexa Speaker Be Hacked?

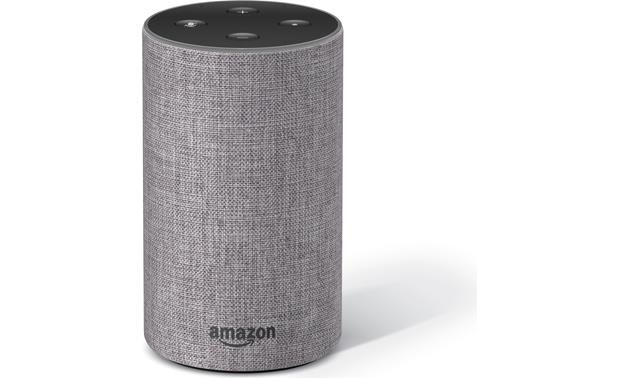

In this digital era, our AI assistants can track our every move and keep an eye on us quite effectively. This is alarming, especially since research shows that hackers can gain access to Alexa speakers and similar devices. Recent research describes two ways in which malicious actors could exploit an assistant’s weaknesses. The earliest adversarial attacks targeted image recognition systems. In one case, an image classifier was tricked into identifying a 3D-printed turtle as a rifle. In another, a picture of a lifeboat with a small patch of visual noise in one corner was classified as a Scottish terrier. Neither example would fool a human, yet the AI systems were thoroughly confused. After this, researchers set out to show that the same kind of vulnerability could be exploited through audio as well.

The researchers came up with a special audio cue that prevents Alexa from being activated. In other words, while the musical cue is playing, Alexa does not hear its wake word being called. Alexa responded only 11% of the time in this situation, as opposed to 80% of the time when other music was playing and 93% of the time when there was no music or other audio in the background. In this way, the team made Alexa treat a positive as a negative, that is, a false negative. Now they are trying to induce a false positive, in which the assistant wakes up even though nobody has said the wake word. That would make more malicious uses possible.
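To make the false-negative idea concrete, the short sketch below converts the response rates quoted above into miss rates, meaning the share of attempts in which the wake word went unanswered. The dictionary keys and variable names are only illustrative labels, and the arithmetic assumes each attempt either got a response or did not.

```python
# Response rates as quoted in the article (fraction of wake-word
# attempts that Alexa answered). The labels are illustrative only.
response_rates = {
    "adversarial music cue": 0.11,
    "other music": 0.80,
    "no background audio": 0.93,
}

# A false negative here is a spoken wake word that goes unanswered,
# so the miss rate is simply 1 minus the response rate.
miss_rates = {
    condition: round(1 - rate, 2)
    for condition, rate in response_rates.items()
}

for condition, miss in miss_rates.items():
    print(f"{condition}: missed {miss:.0%} of attempts")
```

Read this way, the crafted cue takes the miss rate from roughly one attempt in five with ordinary music to almost nine in ten.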

Attacking With Lasers

While one team of researchers from Carnegie Mellon worked on audio cues and the attacks they make possible, another team from Japan and the University of Michigan set out to understand what role lasers could play. Lasers could help malicious users gain control of your smart home speakers, such as the Amazon Echo. The U.S. Defense Advanced Research Projects Agency (DARPA) funds this project in part. The team showed how Alexa speakers and other smart speakers could be hacked without any audio. As long as they had line-of-sight access to the device, they could hack the system.

A flickering laser can make the voice assistant and the smart speaker register commands. The microphone normally understands us by picking up changes in air pressure. When a laser’s intensity varies in the same pattern as a sound, there is a chance that the microphone will respond to this ‘noise’ as if it were audio. This could be highly dangerous. For instance, an attacker could record a command and encode it into a laser signal. When the Alexa speaker ‘hears’ it, it would carry out the command. Although the hackers would need to be somewhere nearby, the fact that this could be done from outside one’s house makes it alarming.
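The encoding step described above can be illustrated with a minimal sketch. It assumes an idealized microphone that responds linearly to light intensity, and it uses a plain 440 Hz tone as a stand-in for a recorded command. The function names and the `bias` and `depth` values are hypothetical choices for illustration, not figures or code from the research.

```python
import math


def audio_to_laser_intensity(samples, bias=0.5, depth=0.4):
    """Map audio samples in [-1, 1] to a laser intensity in [0, 1].

    The laser cannot emit negative light, so the audio rides on a
    constant bias level. bias and depth are hypothetical values.
    """
    if bias - depth < 0.0 or bias + depth > 1.0:
        raise ValueError("bias +/- depth must stay within [0, 1]")
    # Clamp each sample to [-1, 1] before scaling it onto the bias.
    return [bias + depth * max(-1.0, min(1.0, s)) for s in samples]


def recover_audio(intensity):
    """Idealized microphone: drop the constant level, rescale to peak 1."""
    mean = sum(intensity) / len(intensity)
    ac = [x - mean for x in intensity]
    peak = max(abs(x) for x in ac) or 1.0
    return [x / peak for x in ac]


# Stand-in for a recorded voice command: 0.1 s of a 440 Hz tone.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 440 * n / sample_rate) for n in range(800)]

intensity = audio_to_laser_intensity(tone)
recovered = recover_audio(intensity)
```

In this simplified model the brightness pattern carries the same waveform as the original sound, which is why the microphone can mistake it for a spoken command. A real attack also depends on the laser hardware, aiming, and how a particular microphone reacts to light, none of which this sketch attempts to model.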

Taking Care of the Vulnerabilities

Of course, all connected devices are potentially vulnerable to attacks. The scary part is that users don’t seem to grasp the magnitude of the issue. This shows in how reluctant most of us are to give up our smart devices and the convenience we have become accustomed to. Voice-assisted tech keeps gaining popularity. One way to make it safer to use is to take these research examples seriously. Companies that design and manufacture these devices and systems need to patch these vulnerabilities.